May 3, 2026

Let me tell you what happens in the first ninety seconds of a case study walkthrough.

The interviewer stops evaluating the work.

They start evaluating you.

Not your Figma skills. Not your colour choices. Not whether the final screen looks polished enough to go on Dribbble. They're listening for something underneath all of that — and most aspiring designers don't know what it is until they've already left the room.

Spoiler: it has nothing to do with how clean your process looked. It has everything to do with how honest you were about where it got messy.

This post is the thing nobody tells you before you walk in.

A small confession before we get into it.

I've been on both sides of this table.

I've sat across from students who had genuinely brilliant work — work that deserved an offer — and watched them talk themselves out of the room. Not because they weren't good enough. But because nobody had ever told them what was actually being listened for.

I've also been the candidate during my early days. I know what it feels like to walk out of a room convinced you nailed it — and then hear nothing for two weeks.

That silence is the worst kind of feedback. Because it gives you nothing to fix.

This post exists because of that silence.

Everything here comes from real conversations — from mentoring sessions on ADPList, from debriefs with students, from the patterns I kept seeing repeat themselves across portfolios, across design institutes teaching the same frameworks on loop.

I'm not writing this from a distance…

…I’m writing it from the other chair.

What Students Think Interviewers Want

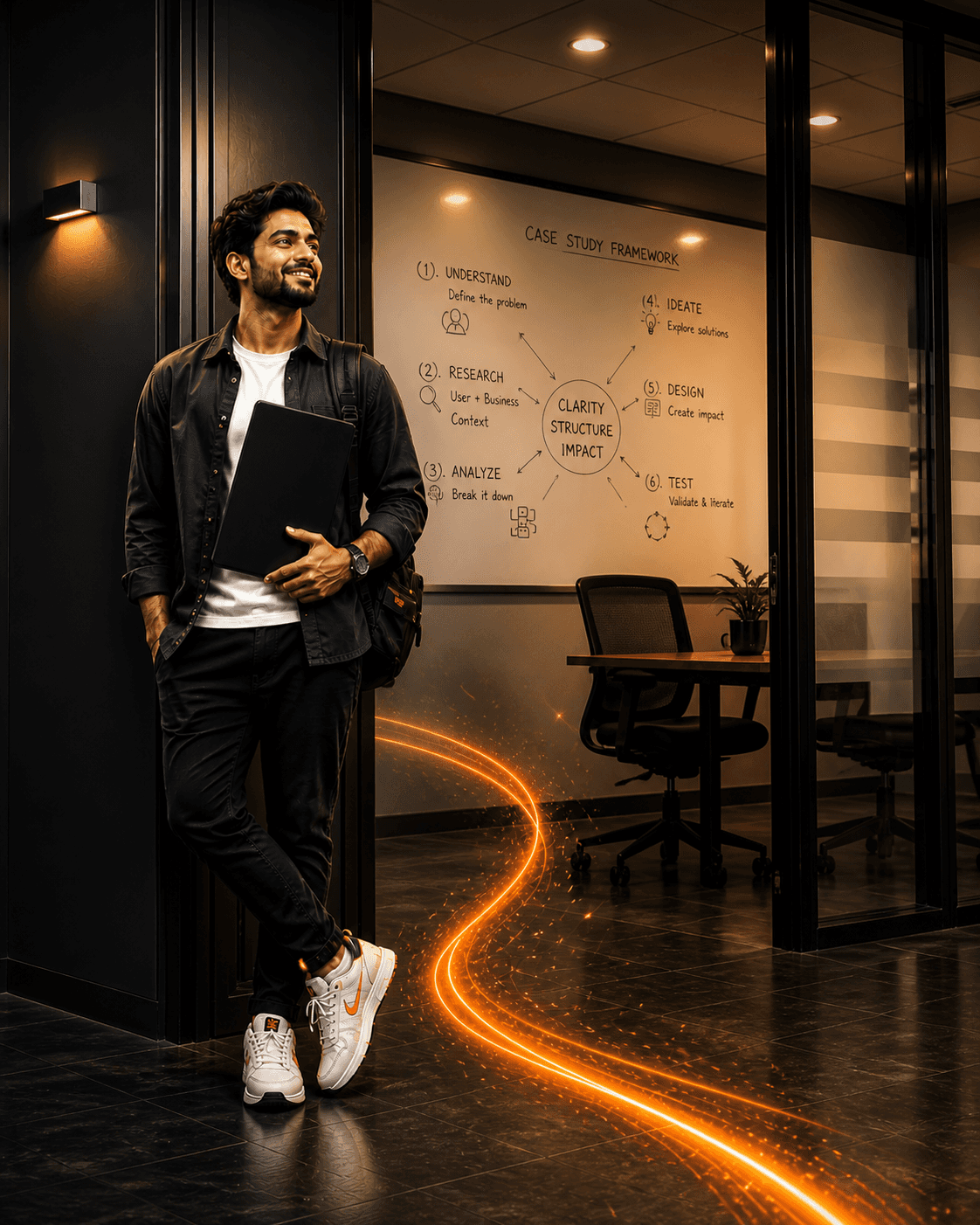

UI/UX designers are particularly susceptible to this trap. This stems from design education’s focus on visual communication over verbal.

Consequently, tools like the double diamond, Behance portfolios and polished case decks are optimised for visual appeal rather than practical application.

In the student's version, a successful interview involves a clean process, confident delivery and a tidy walk through the double diamond. Research provides insights that guide solution development. The final slide showcases positive metrics demonstrating success.

It looks good. It sounds rehearsed…

…And that's exactly the problem.

The polished deck is the most common reason good designers don't get the job.

Not because the work is bad — but because the presentation makes it impossible to see the designer inside the work…

What Interviewers Actually Want to Hear

There's an unspoken checklist running in every interviewer's head while you talk.

It sounds something like this:

→ Did they own the mess — or hide it? Every real project has a moment where something broke, a stakeholder pushed back, or the initial direction turned out to be wrong. The designer who names that moment — clearly, without defensiveness — is the one worth hiring. The one who presents a frictionless journey is the one who either didn't face reality, or isn't ready to talk about it.

→ Can they say why — not just what? "We chose this pattern because it tested well" is a what. "We chose this pattern because the user base skewed 55+ and familiarity outweighed novelty in that context" is a why. The second answer tells an interviewer you understand the reasoning layer of design — not just the execution layer.

And that matters because AI has become very good at execution, so human judgement and reasoning are what count now.

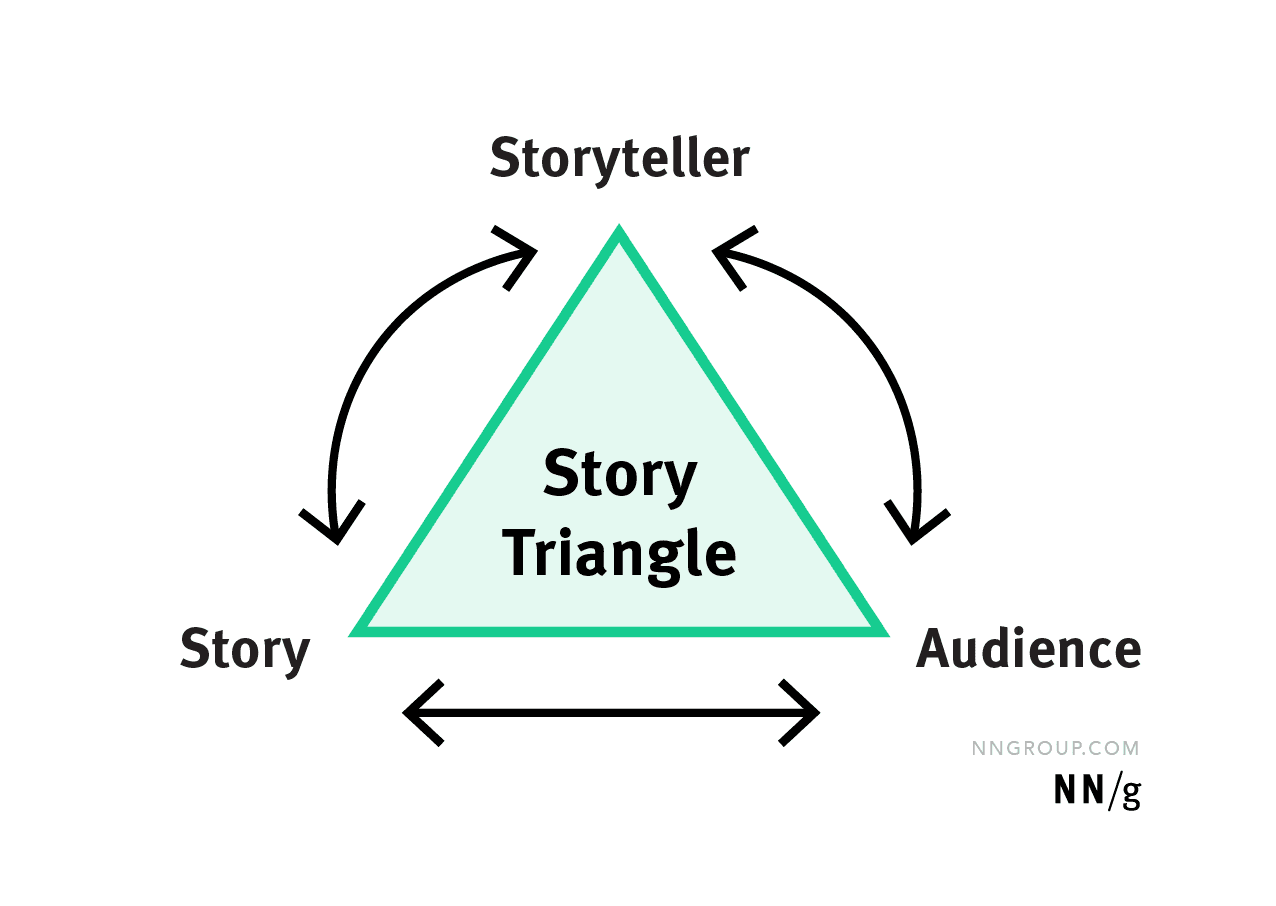

→ Are they presenting a project — or telling a story? A project has deliverables. A story has a perspective. Interviewers remember perspectives.

They forget deliverables by the time you've left the building.

The stereotype of the hype-crafted, post-pandemic portfolio — the one most design institutes were producing on loop — has started to fade.

What's replacing it is something harder to fake: a point of view.

The AI Layer Most Aren't Talking About

Here's the signal that's quietly separating strong candidates from forgettable ones in the post-COVID market, where so many portfolios speak in the same stereotyped voice. Most students don't know it exists yet.

Interviewers can tell when AI did the thinking for you.

And they can tell when it did the thinking with you, as an ally and partner.

The difference is audible. And it shows up most clearly in how you talk about your process.

UI/UX designers are among the least likely to have built the enterprise secondary research habit, and that's not a character flaw. It's a curriculum gap. Most design institutes taught discovery as user interviews.

Research meant talking to people. It rarely meant mapping a regulatory landscape, synthesising competitor benchmarks, or building domain fluency before the first stakeholder conversation ever happened.

AI makes that gap closable in hours. But only if the designer knows the gap exists.

Here's what that actually looks like:

Secondary research synthesis. In enterprise design, walking into a stakeholder meeting cold is a credibility problem. Before a single discovery call happens, the strongest designers have already mapped the landscape: industry reports, compliance requirements, competitor benchmarks, legacy system constraints, domain terminology, and whatever else is needed to understand the business.

AI makes that synthesis faster than it's ever been. But the interviewer is listening for whether you knew what to look for — not just whether you ran a prompt.

The student who says: "I used AI to pull together secondary research on the regulatory environment before our discovery calls — so I could ask sharper questions, not basic ones" — is signalling domain awareness, systems thinking, and respect for the stakeholder's time. Most freshers don't even know that muscle exists yet.

Requirement synthesis, and interrogating the gaps. AI can pull requirements together quickly.

What it cannot do is know what's missing…

The enterprise context (the workaround that's been running for six years, the compliance clause that overrides the user preference, the internal politics that make a technically correct solution politically impossible) is human knowledge. And the interviewer is listening for whether you knew to look for it, and whether you let it drive your design decisions.

First-pass structure — rebuilt with real context. Letting AI generate the initial structure of a case, then redesigning it with real user and business context, is a legitimate and powerful workflow. But you have to be able to articulate the difference between what AI framed and what you changed — and why.

The line that makes an interviewer lean forward:

"AI gave me X. But I challenged it because the enterprise context required Y — and here's what that decision changed downstream."

That sentence does three things at once:

It shows AI fluency.

It shows critical thinking.

And it shows that you understand the difference between a generated answer and an informed one.

Two Versions of the Same Walkthrough

Here's what the difference sounds like in practice.

Version A (a UI/UX designer's default mode) — sounds polished, loses the room:

"We started with user research, identified three key pain points, and designed a solution that addressed all of them. The final design tested well and the client was happy."

Clean. Confident. But forgettable. ❌

Version B 🎯: the same designer who learned to reframe.

Same background. Different awareness.

Sounds honest, wins the room: "We started with secondary research — used AI to synthesise the regulatory landscape before our first stakeholder call, so we weren't asking questions the client expected us to already know the answers to.

Discovery surfaced a pain point we hadn't anticipated.

We'd initially designed around workflow efficiency, but the real friction was trust — users didn't believe the system would save their work.

We had to go back and redesign the information architecture around that insight. The client pushed back on the timeline. We negotiated scope. The final design wasn't everything we'd planned — but it was the right thing to ship first."

That's a story. That's a perspective.

That's a designer who knows how work actually happens.

The Line That Separates Good From Great

AI fluency today isn't about how much you used it.

It's about whether you can explain your judgment around it.

What did you accept? What did you question? Where did the business context, the user reality, or the enterprise constraint override what the model suggested — and what did you do about it?

That's the new craft. And right now, very few students are ready to talk about it clearly.

The ones who are — they're the ones who leave the room with an offer.

When the Walkthrough Still Didn't Work — A Retrospective

Let's say you did everything right.

You named the mess. You led with why. You talked about your AI usage specifically and honestly. You told a story instead of presenting a project.

And you still didn't get the call.

Here's what to do with that.

Step 1 — Reconstruct the room, not the deck. Don't go back and redesign your slides. Replay the conversation. Where did the interviewer's energy shift? When did the follow-up questions stop? When did the silence feel different?

That’s your data.

Golden tip: do this as soon as possible, and write it down somewhere easy to find later. Right after the interview your memory is still fresh, but conversations fade quickly, and you won't be able to recall the details later.

Step 2 — Ask for the one thing most students never ask for. Not "any feedback would be helpful." Something like — "Could you tell me the moment in the conversation where I lost you?"

Most interviewers won't answer. But some will.

And that one honest reply is worth more than ten mock interviews, because it comes from real experience.

Step 3 — Separate the work problem from the communication problem.

These are two different diagnoses. Two completely different fixes.

Here's the honest test: if a senior designer looked at your case study without hearing you present it — would they still see the thinking? If yes, the work is solid. The story needs work. If no, go deeper into the work itself before you touch the presentation.

Most candidates conflate the two and end up polishing the deck when they should be strengthening the decisions inside it. Or worse — they rebuild the whole project when the work was never the problem.

Fix the right thing. The other one can wait.

Step 4 — Document it like a design failure.

What was the brief? What did you ship? What signal told you it didn't land? What would you change in the next iteration?

Designers know how to run retrospectives on products. Most never run one on themselves.

The interview that didn't work isn't a dead end. It's a research session.

Treat it like one.

Three things to carry into your next case study walkthrough:

1. Name the moment things broke. Not to show weakness. To show you were paying attention.

2. Lead with why, not what. The decision is the data point. The reasoning is the story.

3. Own your AI usage — specifically. Not "I used AI to help with research." Tell them what it gave you, what you interrogated, and what you changed. That specificity is the signal.

The interviewer isn't looking for perfection. They're looking for someone who knows the difference between their own thinking and borrowed thinking —

… And isn't afraid to say so out loud.

——

P.S. — Record yourself walking through your next case study. Count how many times you said what you did versus why you did it.

If your whats outnumber your whys — you're presenting a project.

If your whys outnumber your whats — you're telling a story.

The room hires the second one most of the time.